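During operator performance testing, the MS (MindSpore) time printed by the framework does not match the actual time, which causes problems when comparing performance against the benchmark.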

// CPU time

struct timeval tp;

gettimeofday(&tp, NULL);

double iStart = ((double)tp.tv_sec + (double)tp.tv_usec * 1.e-6);

SparseFillEmptyRows(input_indice_addr, input_values_addr, input_default_values_addr, input_dense_shape_addr, 0,

input_indices_shapes_[0], dense_row, workspace_elements_per_rows_addr,

workspace_empty_rows_count_addr, workspace_row_indices_addr, workspace_input_row_ends_addr,

workspace_sorted_indices_addr, workspace_final_shape_addr, workspace_origin_index_addr,

workspace_sorted_key_addr, reinterpret_cast<cudaStream_t>(cuda_stream_), output_indices_addr,

output_values_addr, output_empty_row_indicator_addr, output_reverse_index_map_addr);

CHECK_CUDA_RET_WITH_EXCEPT_NOTRACE(

cudaMemcpyAsync(&real_output_size_, workspace_final_shape_addr, sizeof(int64_t), cudaMemcpyDeviceToHost,

reinterpret_cast<cudaStream_t>(cuda_stream_)),

"SparseFillEmptyRows cudaMemcpyAsync failed.");

cudaDeviceSynchronize(); // 同步函数

gettimeofday(&tp, NULL);

double iElaps = ((double)tp.tv_sec + (double)tp.tv_usec * 1.e-6) - iStart; //单位是second

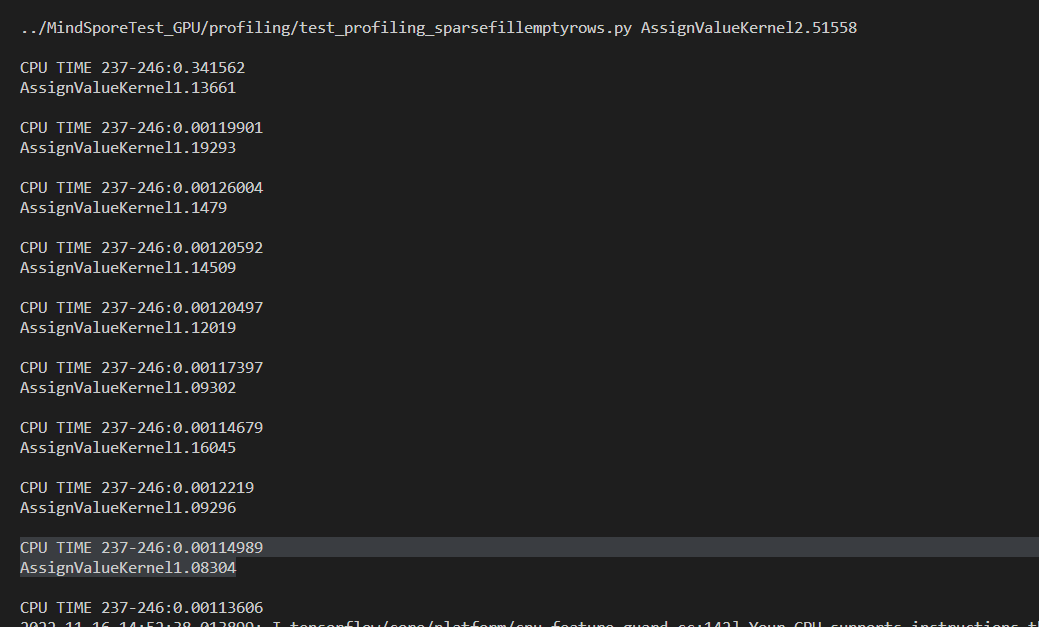

std::cout << "CPU TIME 237-246:" << iElaps * 1.e3 << std::endl;

// CPU time end

return true;

// CUDA event time
cudaEvent_t start, stop;
float elapsedTime = 0.0;
cudaEventCreate(&start);
cudaEventCreate(&stop);
cudaEventRecord(start, 0);
CopyRowIndiceKernel<<<CUDA_BLOCKS(device_id, indice_num), GET_THREADS, 0, cuda_stream>>>(indices_ptr, row_indices,
                                                                                         origin_index, indice_num);
// Note: stop is recorded again after AssignValueKernel; the later record overwrites this one.
cudaEventRecord(stop, 0);
cudaMemset(elements_per_rows, 0, dense_row * sizeof(int64_t));
CalElementPerRowsKernel<<<CUDA_BLOCKS(device_id, indice_num), GET_THREADS, 0, cuda_stream>>>(
  dense_shape_ptr, row_indices, elements_per_rows, indice_num);
CalEmptyRowIndicatorKernel<<<CUDA_BLOCKS(device_id, dense_row), GET_THREADS, 0, cuda_stream>>>(
  elements_per_rows, dense_row, output_empty_row_indicator_ptr);
InclusivePrefixSum(dense_row, elements_per_rows, input_row_ends, cuda_stream);
InclusiveBoolPrefixSum(dense_row, output_empty_row_indicator_ptr, empty_row_count_sum, cuda_stream);
RowsSort(indice_num, cuda_stream, dense_shape_ptr, row_indices, origin_index, sorted_key, sorted_indices, device_id);
AssignValueKernel<<<CUDA_BLOCKS(device_id, dense_row), GET_THREADS, 0, cuda_stream>>>(
  values_ptr, indices_ptr, sorted_indices, dense_row, default_value, empty_row_count_sum, input_row_ends,
  output_values_ptr, output_indices_ptr, indice_num, final_shape, output_reverse_index_map_ptr);
cudaEventRecord(stop, 0);
cudaEventSynchronize(stop);
cudaEventElapsedTime(&elapsedTime, start, stop);  // elapsed time in milliseconds
std::cout << "AssignValueKernel: " << elapsedTime << " ms" << std::endl;
cudaEventDestroy(start);
cudaEventDestroy(stop);
std::cout << std::endl;

Hardware Environment (Ascend/GPU/CPU):

/device GPU

Software Environment (Mandatory):

-- MindSpore version (e.g., 1.7.0.Bxxx) :
-- Python version (e.g., Python 3.7.5) :
-- OS platform and distribution (e.g., Linux Ubuntu 16.04):
-- GCC/Compiler version (if compiled from source):

Execute Mode (Mandatory) (PyNative/Graph):

/mode pynative

/mode graph

import random

import numpy as np
import mindspore as ms
from mindspore import Tensor

# Generate random data.
def gen_data_file(shape, dtype, rand_type, low, high):
    if rand_type == "randint":
        rand_data = np.random.randint(low, high, size=shape)
    else:
        rand_data = np.random.uniform(low, high, size=shape)
    data = np.array(rand_data, dtype=dtype)
    return data

# Generate dense_shape and limit_cnt.
def get_rand_dense():
    dense_x = random.randint(1, 1000)
    dense_y = random.randint(1, 1000)
    limit = dense_x * dense_y
    return dense_x, dense_y, limit

# Generate cnt unique indices within the range [0, limit_x) x [0, limit_y).
def rand_index(cnt, limit_x, limit_y):
    res = set()
    for i in range(cnt):
        # Retry until a pair that has not been seen before is drawn.
        while True:
            x = random.randint(0, limit_x - 1)
            y = random.randint(0, limit_y - 1)
            if (x, y) in res:
                continue
            res.add((x, y))
            break
    return Tensor(list(res))

'''
Dense size 20 million (10000 x 2000), number of values = 100,000
'''
def test_sfer_2000x10000_performance():
    indices = rand_index(100000, 10000, 2000)
    values = Tensor(np.array(np.random.randn(100000)), dtype=ms.float32)
    default_value = Tensor(np.random.randn(), dtype=ms.float32)
    dense = Tensor([10000, 2000], dtype=ms.int64)
    # SparseFillEmptyRowsMock is not defined in this snippet.
    fact = SparseFillEmptyRowsMock(inputs=[indices, values, dense, default_value])
    fact.forward_profile_cmp()

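The first code block is the Launch Kernel function of the GPU kernel. It wraps the CUDA function call to measure CPU (wall-clock) time.

The second code block is the main CUDA function. It wraps the entire CUDA pipeline to measure CUDA (event) time.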

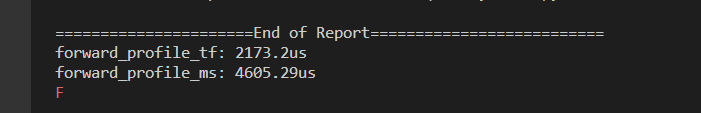

These measurements do not match the time reported by the performance test (unit: microseconds).
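For reference, the wall-clock pattern used in the first code block can be sketched in Python as follows: read the clock, launch the work, wait for it to finish, read the clock again, then convert to the unit being compared. This is only an illustration. The names `time_call`, `launch`, and `synchronize` are hypothetical stand-ins for the kernel launch and `cudaDeviceSynchronize()`; they are not MindSpore APIs.

```python
import time


def time_call(launch, synchronize=lambda: None):
    """Return the wall-clock duration of launch() in seconds.

    Both callables are hypothetical placeholders for this sketch.
    synchronize() runs before the second clock read, mirroring the
    cudaDeviceSynchronize() call in the C++ snippet.
    """
    start = time.perf_counter()
    launch()
    # CUDA kernel launches are asynchronous, so in the CUDA case skipping
    # this step would time only the launch, not the kernel execution.
    synchronize()
    return time.perf_counter() - start


def to_ms(seconds):
    """Convert seconds to milliseconds."""
    return seconds * 1e3


def to_us(seconds):
    """Convert seconds to microseconds."""
    return seconds * 1e6


if __name__ == "__main__":
    elapsed = time_call(lambda: sum(range(100000)))
    # Print both units so the value can be compared with either report.
    print(f"{to_ms(elapsed):.3f} ms = {to_us(elapsed):.1f} us")
```

Since the snippets above print milliseconds while the performance test reports microseconds, converting both to the same unit before comparing rules out a simple factor-of-1000 mismatch.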

Appearance & Root Cause

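There are multiple instances of the operator, distributed across different graphs, so the operator invocation count was computed incorrectly.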

Fix Solution

Recount operator invocations by operator type.
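As an illustration of the idea only, the sketch below merges per-graph operator records by type before counting, so instances spread over several graphs contribute to one total. The record layout (a list of graphs, each a list of `(op_type, duration_us)` tuples) and the function name `count_by_type` are assumptions made for this example; the actual change is the one in the pull request referenced in this issue.

```python
from collections import defaultdict


def count_by_type(graphs):
    """Aggregate call count and total time per operator type across graphs.

    `graphs` is an assumed layout: a list of graphs, where each graph is a
    list of (op_type, duration_us) tuples.
    """
    stats = defaultdict(lambda: {"count": 0, "total_us": 0.0})
    for graph in graphs:
        for op_type, duration_us in graph:
            stats[op_type]["count"] += 1
            stats[op_type]["total_us"] += duration_us
    # Derive the per-call average from the merged totals.
    return {
        op_type: {**s, "avg_us": s["total_us"] / s["count"]}
        for op_type, s in stats.items()
    }


if __name__ == "__main__":
    graphs = [
        [("SparseFillEmptyRows", 120.0), ("Add", 10.0)],
        [("SparseFillEmptyRows", 80.0)],
    ]
    print(count_by_type(graphs)["SparseFillEmptyRows"])
```

If the count were taken from a single graph while the total time covered all graphs, the per-call average would be inflated, which is one way a reported time could diverge from a direct measurement.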

Fix Description & Test Suggestion

For details, see: https://e.gitee.com/mind_spore/repos/mindspore/mindspore/pulls/45686

Self-test Report

Self-verification passed. The fix can be verified with the latest MS (MindSpore) package.
